Last Updated on March 12, 2026 by Craig Allen Keefner

What It Means for Healthcare Kiosks

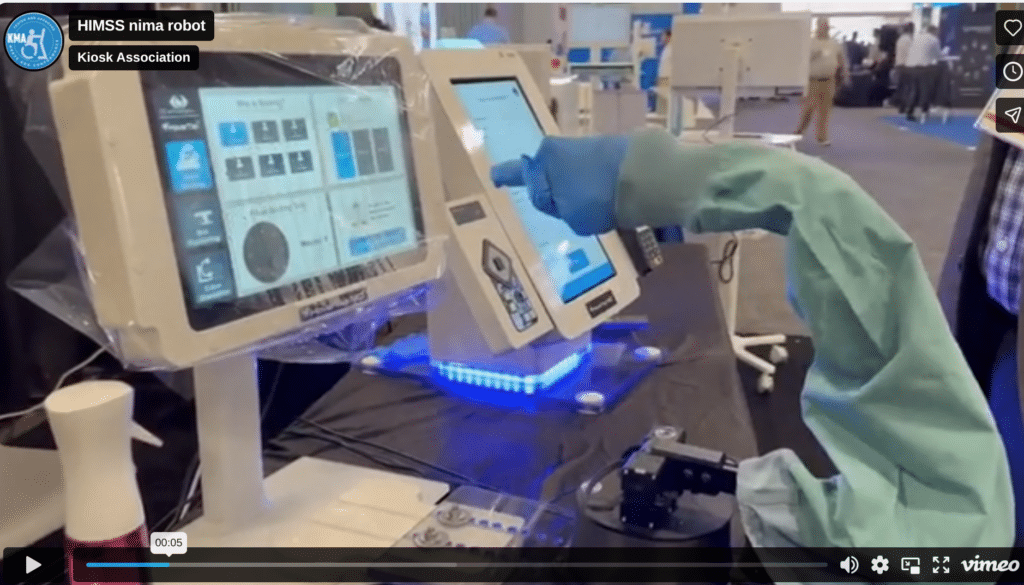

Healthcare kiosk designers have spent the last decade optimizing the touchscreen experience: better UI design, faster workflows, and mobile-first patient journeys. But a demonstration circulating around HIMSS this year suggests the next interface layer may eliminate screen interaction entirely.

Researchers demonstrated a robot designed to teach artificial intelligence how to interpret human gestures. Using cameras and motion tracking, the system observes hand movements, pointing behavior, and body orientation, converting those signals into training data for machine learning models.

The objective is simple but powerful: build systems that can understand what a person intends without requiring them to touch a screen or speak a command.

Why This Matters for Self-Service Kiosks

For anyone deploying patient-facing technology, gesture recognition could become a critical component of the next generation of hospital self-service systems.

Instead of tapping buttons on a kiosk screen, patients might:

- Point toward a department to trigger wayfinding directions
- Raise a hand to initiate check-in
- Use simple gestures to navigate menus
- Confirm selections through visual cues rather than touch

These types of interfaces are particularly valuable in healthcare environments where hygiene, accessibility, and ease of use are top priorities.

Touchless interaction was a pandemic-era requirement. Now it is evolving into a design philosophy for hospital digital front doors.

The Technology Stack Behind Gesture Interfaces

Gesture recognition systems rely on a combination of technologies already appearing in kiosk deployments:

Computer Vision Cameras

Depth cameras and RGB sensors track movement patterns.

Edge AI Processing

Machine learning models analyze motion data locally, on the kiosk itself, to avoid the latency of a round trip to the cloud.

Behavior Classification Models

Neural networks translate raw movement into defined commands.

Integration with Kiosk Software

Gesture signals map to UI actions such as menu navigation, check-in confirmation, or wayfinding prompts.
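As a rough illustration of that last integration step, the sketch below shows one way per-frame classifier output might be turned into discrete kiosk UI actions. Everything named here is hypothetical: the gesture labels, the action names, the confidence threshold, and the hold duration are chosen for the example and are not taken from the demonstrated system or any particular kiosk platform.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical gesture labels and kiosk actions, invented for illustration.
GESTURE_TO_ACTION = {
    "raise_hand": "start_check_in",
    "point_left": "menu_previous",
    "point_right": "menu_next",
    "thumbs_up": "confirm_selection",
}


@dataclass
class GesturePrediction:
    label: str
    confidence: float  # 0.0 to 1.0, as reported by an assumed classifier


class GestureDispatcher:
    """Turns a stream of per-frame predictions into discrete UI actions."""

    def __init__(self, min_confidence: float = 0.8, hold_frames: int = 5):
        self.min_confidence = min_confidence  # assumed threshold
        self.hold_frames = hold_frames        # frames a gesture must persist
        self._candidate: Optional[str] = None
        self._streak = 0

    def update(self, prediction: GesturePrediction) -> Optional[str]:
        """Return an action name once a gesture is held long enough, else None."""
        known = prediction.label in GESTURE_TO_ACTION
        if not known or prediction.confidence < self.min_confidence:
            # Unknown or uncertain frames reset the streak.
            self._candidate = None
            self._streak = 0
            return None
        if prediction.label == self._candidate:
            self._streak += 1
        else:
            self._candidate = prediction.label
            self._streak = 1
        # Fire exactly once, on the frame where the streak reaches the threshold.
        if self._streak == self.hold_frames:
            return GESTURE_TO_ACTION[prediction.label]
        return None
```

Requiring a gesture to persist for several consecutive frames is one simple way to avoid triggering an action on an accidental movement. A real deployment would likely need these values tuned per camera, frame rate, and patient population.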

As edge processors continue improving—particularly with AI accelerators and NPUs—gesture recognition is becoming practical in embedded kiosk hardware rather than requiring cloud processing.

Accessibility Benefits

Gesture-based interaction could also help address one of the biggest challenges in kiosk design: universal accessibility.

Many patients struggle with traditional touchscreen workflows due to:

- limited mobility
- vision impairment
- unfamiliarity with digital interfaces
- language barriers

Gesture interaction offers an alternative control layer that can work alongside voice AI and traditional touch interfaces, creating a more inclusive patient experience.
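One way to picture gesture as a control layer working alongside voice and touch is to have every input method resolve to the same set of intents, so the kiosk workflow does not care how the patient expressed a choice. The sketch below is a minimal illustration under that assumption. The button IDs, spoken phrases, gesture labels, and action names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Modality(Enum):
    TOUCH = "touch"
    VOICE = "voice"
    GESTURE = "gesture"


@dataclass(frozen=True)
class Intent:
    action: str
    modality: Modality


# Hypothetical per-modality vocabularies that all resolve to shared actions.
TOUCH_BUTTONS = {"btn_check_in": "start_check_in", "btn_directions": "show_wayfinding"}
VOICE_PHRASES = {"check in": "start_check_in", "directions": "show_wayfinding"}
GESTURES = {"raise_hand": "start_check_in", "point": "show_wayfinding"}


def to_intent(modality: Modality, raw: str) -> Optional[Intent]:
    """Normalize a raw input event into a shared Intent, or None if unrecognized."""
    key = raw.strip().lower()
    if modality is Modality.TOUCH:
        action = TOUCH_BUTTONS.get(key)
    elif modality is Modality.VOICE:
        # Naive substring match stands in for real speech understanding.
        action = next((a for phrase, a in VOICE_PHRASES.items() if phrase in key), None)
    else:
        action = GESTURES.get(key)
    return Intent(action, modality) if action else None
```

With this shape, a patient who cannot comfortably reach the screen and one who prefers to tap both arrive at the same `start_check_in` step, and the modality is retained only for logging or analytics.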

The Broader Shift Toward Natural Interfaces

Gesture recognition is part of a larger trend in self-service technology toward natural human-machine interaction.

Three interface layers are rapidly converging:

- Voice AI – Conversational ordering and assistance
- Computer Vision – Facial recognition and identity verification
- Gesture Recognition – Non-verbal control of systems

Together these technologies move kiosks away from rigid menu navigation and toward human-centered interaction models.

For hospitals, this could redefine how patients interact with digital systems across the campus—from check-in and wayfinding to service robots and automated assistance stations.

Watch the Demonstration

The gesture-recognition training robot featured in this discussion is shown in the video and analysis published on PatientKiosk.io.

👉 Full article and video: [Patient Kiosk – HIMSS Demo Robot Teaching AI Gesture Recognition](https://patientkiosk.io/himss-demo-robot-teaching-ai-gesture-recognition/)

TIG Executive Perspective

The self-service industry is entering a phase where interfaces become invisible.

Instead of learning how to operate machines, users will increasingly interact with systems the same way they interact with other humans—through voice, motion, and visual cues.

For kiosk deployers and healthcare IT leaders, the real question is no longer whether touchless interaction will arrive.

It is how quickly natural interfaces will replace the touchscreen as the primary control layer for patient-facing systems.

